The Realities Of Building A B2B Chatbot

This Q&A explores the realities of building a B2B chatbot from the perspectives of the copy and code teams.

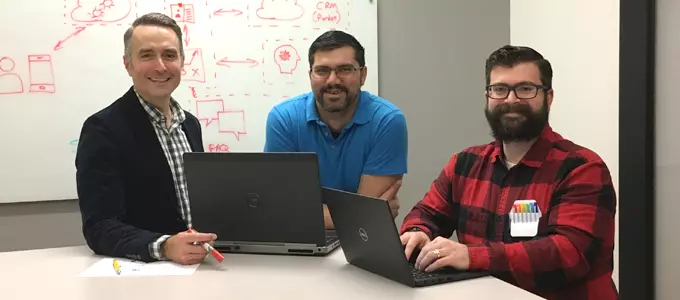

Building a chatbot and looking for more than another 101 guide? This Q&A with a developer, a writer and a self-proclaimed “chatbot wrangler” offers practical advice from real-world experience. Godfrey chatbot creators Andy Hunt (executive creative director, Digital), David Ali (senior software engineer) and Matthew Kabik (copywriter) share their perspectives on what it takes to create a successful chatbot and the lessons they learned along the way. (For a primer on chatbots, check out How to Build a Better Chatbot.)

To get started, tell us about the chatbot project and your role.

Andy: We developed the chatbot as part of a new product launch for one of our clients. The product technology was positioned as so advanced “it must be from the future.” The objective was to provide an experience where a user could interact with this high-tech product in a very futuristic way: by chatting with it as if it were a sentient being. The bot is the voice of the product. It can answer FAQs about its features, technology and specs, and it can even tell jokes. My role was strategist, tech consultant, project manager and bot trainer. I defined the scope, ran daily huddles and accuracy tests, and tried to keep everyone collaborating.

David: I implemented the code and set up the services that power the chatbot. It consists of an interface, an AI decision engine and a knowledge repository. The chatbot’s purpose was not only to inform people about the new product but also to capture leads.

Matthew: I was the writer and voice of the bot. I took the client’s raw content and crafted dialogues in a style appropriate for the bot’s personality and the company brand. I categorized the content into intents and entities, added variations of each question and answer, and tested and refined how the bot responded.

You mentioned some of the technologies involved in the chatbot’s creation. What specific tools and services did the team use to plan, manage and execute the project?

Andy: We used Microsoft Cognitive Services for our artificial intelligence (AI) and natural language processing (NLP). The bot was coded in C# using the Microsoft Bot Framework and is hosted by Microsoft Azure Services. We used Twilio as the SMS processing service, Pardot for lead collection/marketing automation, and dashbot.io for analytics.

David: The client didn’t dictate the technology solution; we were able to identify the needs and determine what would work best. The Microsoft Bot Framework provided the basic building blocks for assembling the bot. It receives the messages users send, routes each one to the appropriate dialog, sends the response back and keeps track of each conversation. Microsoft’s LUIS (Language Understanding Intelligent Service) was the decision engine: it determined user intent and scored and weighted each question. QnA Maker was the repository for the question and answer sets.
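To make that division of labor concrete, here is a minimal Python sketch of the same flow. It is not the team’s C# code and calls no Microsoft APIs: it assumes a toy keyword scorer in place of LUIS and a plain dictionary in place of QnA Maker. The intent names, keywords, replies and the 0.3 confidence threshold are all hypothetical.

```python
import re

# Hypothetical intents and keywords standing in for a trained model such as LUIS.
INTENT_KEYWORDS: dict[str, set[str]] = {
    "tell_joke": {"joke", "funny", "laugh"},
    "contact_sales": {"price", "quote", "sales", "buy"},
}

# Hypothetical dialog handlers; each returns the bot's reply.
DIALOGS = {
    "tell_joke": lambda: "I'd tell you a joke about the future, but you haven't heard it yet.",
    "contact_sales": lambda: "I can connect you with sales. What's your email?",
}

# Hypothetical Q&A repository standing in for a service such as QnA Maker,
# keyed by normalized question text.
QNA: dict[str, str] = {
    "what is the battery life": "Placeholder answer about battery life.",
}

FALLBACK = "Sorry, I don't know that one yet."


def words(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def score_intents(text: str) -> dict[str, float]:
    """Score each intent by the fraction of its keywords that appear in the text."""
    tokens = set(words(text))
    return {
        intent: len(tokens & keywords) / len(keywords)
        for intent, keywords in INTENT_KEYWORDS.items()
    }


def route(text: str, threshold: float = 0.3) -> str:
    """Send confident intents to their dialog; otherwise fall back to the Q&A lookup."""
    scores = score_intents(text)
    best_intent, best_score = max(scores.items(), key=lambda item: item[1])
    if best_score >= threshold:
        return DIALOGS[best_intent]()
    # Low confidence on every intent: try an exact match on the normalized question.
    return QNA.get(" ".join(words(text)), FALLBACK)


print(route("Tell me a joke"))
print(route("What is the battery life?"))
```

Here “Tell me a joke” clears the threshold for the joke dialog, while “What is the battery life?” scores zero on every intent and falls through to the Q&A lookup. The threshold is the main tuning knob in this sketch: raise it and more questions fall back to the repository; lower it and dialogs fire on weaker matches.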

Matthew: To manage content with the client, we used Excel as the document go-between to keep track of question and answer sets and categories. It was easiest for the client because they had a large team weighing in on the text. The content is still growing, but at last count we had over 4,000 questions.
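A spreadsheet go-between like this is straightforward to turn into bot content. The sketch below assumes the sheet is exported to CSV with `category`, `question` and `answer` columns (hypothetical names, as are the placeholder rows) and groups the pairs by category using only Python’s standard library.

```python
import csv
import io
from collections import defaultdict

# Hypothetical CSV export of the shared spreadsheet; columns and rows are placeholders.
SHEET = """category,question,answer
Specs,What is the battery life?,Placeholder battery answer.
Specs,How much does it weigh?,Placeholder weight answer.
Fun,Tell me a joke,Placeholder joke.
"""


def load_qna(csv_text: str) -> dict[str, list[tuple[str, str]]]:
    """Group (question, answer) pairs by category, skipping incomplete rows."""
    grouped: defaultdict[str, list[tuple[str, str]]] = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        category = (row.get("category") or "Uncategorized").strip()
        question = (row.get("question") or "").strip()
        answer = (row.get("answer") or "").strip()
        if question and answer:  # Drop rows the client hasn't finished yet.
            grouped[category].append((question, answer))
    return dict(grouped)


qna = load_qna(SHEET)
print({category: len(pairs) for category, pairs in qna.items()})  # → {'Specs': 2, 'Fun': 1}
```

Skipping incomplete rows rather than failing keeps a large, still-growing sheet loadable while many reviewers are editing it at once.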

This was your first Q&A chatbot—and the client’s. What went well and what didn’t?

Andy: We allotted more time for project discovery, but even with more planning, we didn’t give ourselves enough time to construct the dialogs. We didn’t understand the work required to structure the content or just how much content we would have to create for the bot to be of value to users. It takes a mix of subject matter knowledge, linguistics and psychology to build out an organized content set for use with AI.

We also didn’t plan enough testing time. AI needs an additional training step after each round of testing. After each testing period, we added more content, so each subsequent round of testing and training grew longer. In the end, everyone stepped up, worked extra hours and devoted the time to get the job done, but it seemed never-ending. We didn’t panic, though; even during the most stressful periods, everyone kept their heads.

Matthew: I agree that we underestimated the time to complete the content and testing tasks. I also feel we oversold what was possible without knowing for sure whether we could deliver on our promises. We now have a strong understanding of what AI and these technologies are capable of, and we understand the roles involved and the many rounds of iteration that chatbot work requires.

David: What went well was having one person who was the content aggregator and owner. Matt actually became the bot, which was key to creating a consistent experience. We also had good collaboration on ideas from a solid team.

What advice would you give someone who has never done a project like this before?

Andy: Make it a priority to gather as much content as possible before populating any AI knowledge base. Take the time to organize it so you understand the topics, intents, entities and phrases, especially if it’s a jargon-filled knowledge base. Second, plan for an ongoing, regular analytics program. There is so much intel to be gathered from analyzing what people say to the bot and what their intents are.

David: Plan out the dialog flow with UX, content and development to provide a smooth development path. This helps identify where the user should be routed after a response has been delivered, what type of input the bot should expect and what it should return. We mapped out not only the UI conversations but also the information routing behind the scenes (for lead gen and marketing automation).
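One way to capture a mapped-out dialog flow in code is a small state machine. This is a hypothetical Python sketch: the state names, prompts and email check are illustrative, and where a real bot would pass the lead to a marketing-automation system, the sketch only stores it on the conversation object.

```python
import re
from dataclasses import dataclass, field

# Deliberately loose email check for the sketch; real validation would be stricter.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class Conversation:
    """Tracks where the user is in a hypothetical Q&A-plus-lead-capture flow."""
    state: str = "answering"
    lead: dict[str, str] = field(default_factory=dict)


def step(conv: Conversation, user_text: str) -> str:
    """Advance the conversation by one turn and return the bot's reply."""
    text = user_text.strip()
    if conv.state == "answering":
        # A real bot would look up the answer here; the sketch uses a placeholder.
        conv.state = "offer_followup"
        return "Here's what I know about that. Want someone to follow up? (yes/no)"
    if conv.state == "offer_followup":
        if text.lower() in {"yes", "y"}:
            conv.state = "ask_email"
            return "Great! What's the best email to reach you?"
        conv.state = "answering"
        return "No problem. Ask me anything else."
    if conv.state == "ask_email":
        if EMAIL_RE.match(text):
            # Hand-off point to marketing automation; the sketch just stores the lead.
            conv.lead["email"] = text
            conv.state = "answering"
            return "Thanks! Someone will be in touch."
        # Stay in the same state and re-prompt on invalid input.
        return "That doesn't look like an email address. Could you try again?"
    raise ValueError(f"unknown state: {conv.state}")


conv = Conversation()
step(conv, "What's the battery life?")
step(conv, "yes")
step(conv, "jane@example.com")
print(conv.lead)  # → {'email': 'jane@example.com'}
```

Writing the flow down this way forces the questions David describes: every state has to say what input it expects and where the user goes next, including what happens on bad input.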

Matthew: Set very specific expectations. For example: “The bot will have X number of question and answer pairs. This is how the bot will work. This is what you should and shouldn’t expect.” Otherwise, scope creep is endless.

Andy: Yes, be honest with your client or internal teams. AI can be very complicated, despite the hype and the vendors who make it seem so simple. It is truly a multidisciplinary effort. Make sure you have the right people, and that they have the time to help.

In your opinion, when does a chatbot make sense? What should a company consider before deciding to build a chatbot?

Andy: If a company or a team is looking to dip their toes into the waters of AI, a simple chatbot makes a lot of sense. It’s easy to go off the deep end with AI, but getting started with a basic bot is easier because there are lots of tools and libraries available. But just because the tools are readily available, I wouldn’t advise building a chatbot as a standalone tactic. It should have a solid purpose or be part of a larger campaign.

Matthew: A chatbot can help automate a solution for user interactions that don’t follow a normal flow. A Q&A chatbot like ours makes sense as an interactive way for users to learn about a product or service. It’s also a great way to gather information for customer service problems and generate leads. It’s not useful if you expect it to replace human-to-human interaction or to “learn” everything about a subject. Bots are only as smart as what they are told to know.

What is the one thing you know now that you wish you had known at the beginning of the project?

David: I would put more emphasis on planning upfront; that is my biggest takeaway. It helps get everyone on the same page and sets a clear project direction. We changed direction a few times, and I had to recode things. Specific to our Q&A bot, we also learned that we needed to break the FAQs out into more specific content repositories to help the service determine the best-matching response.

Andy: You will not hit 100% accuracy with your bot’s answers. The TV commercials make it sound like AI is right every time, but even the AI from Google, Microsoft and others is not perfect. Realize that you might only get 80% accuracy (which is about the industry average). Knowing that helps set expectations at the outset.

Matthew: (yawning) I wish I had known the time commitment.
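Accuracy tests like the ones Andy describes can be as simple as replaying a labeled set of test questions and counting correct answers. The Python sketch below assumes each test result records a category, the expected answer ID and the ID the bot actually returned (all hypothetical); breaking the score down by category shows where to add content next.

```python
from collections import Counter


def accuracy_report(results: list[tuple[str, str, str]]) -> tuple[float, dict[str, float]]:
    """Each result is (category, expected_id, returned_id).

    Returns overall accuracy and accuracy per category.
    """
    if not results:
        raise ValueError("no test results")
    totals: Counter[str] = Counter()
    hits: Counter[str] = Counter()
    for category, expected, returned in results:
        totals[category] += 1
        if expected == returned:
            hits[category] += 1
    overall = sum(hits.values()) / sum(totals.values())
    per_category = {category: hits[category] / totals[category] for category in totals}
    return overall, per_category


# Hypothetical test run: "none" means the bot returned no answer.
results = [
    ("specs", "battery", "battery"),
    ("specs", "weight", "weight"),
    ("fun", "joke_1", "joke_1"),
    ("specs", "size", "size"),
    ("fun", "joke_2", "none"),
]
overall, per_category = accuracy_report(results)
print(overall, per_category)  # → 0.8 {'specs': 1.0, 'fun': 0.5}
```

Tracking the per-category numbers from one test round to the next makes it easier to tell whether new content is actually helping or just growing the knowledge base.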

Any parting thoughts?

Andy: Just try it. We didn’t let the fact that we hadn’t done it before stop or slow us down. There are lots of tools out there to get started. You can quickly spin something up that will give you a flavor for the basics. BUT if you decide to keep going, make sure you do it with a real plan in place. It’s way too easy to go down a rabbit hole and not know how to get out. Be prepared to have fun!


Godfrey Team

Godfrey helps complex B2B industries tell their stories in ways that delight their customers.