Can Artificial Intelligence Make Your B2B Content Smarter? (Part 2)

See how A.I. and machine learning can (and cannot) make your B2B marketing and social content 75% more effective.

Yes, it's true: Artificial Intelligence (A.I.) has already evolved to be able to write effective email and social media content. Depending on who you believe, content produced by an A.I. function known as natural language generation (NLG) is 75% "better" than what a human alone can create.

The scare quotes around "better" are there for a purpose.

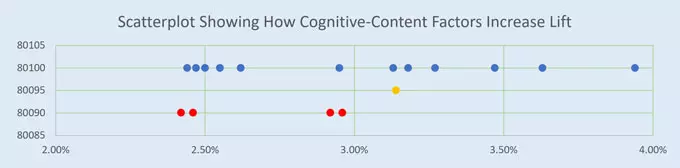

In marketing, increased effectiveness can be measured in terms of "lift," the relative increase over a baseline. If a smart human being writes a social post that boosts the baseline content's click-through rate (CTR) from 3% to 4.5%, that is a 50% lift. If an A.I. writes a version with a 5.25% CTR, then the lift is 75%. So the A.I. version is 75% "better" than the baseline, but only about 17% better than the smart human's version.
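The lift arithmetic above can be checked with a few lines of Python. This is a minimal sketch; the function name `lift` and the variable names are illustrative, and the CTR figures are the ones used in the example above.

```python
def lift(baseline_rate: float, variant_rate: float) -> float:
    """Relative improvement of a variant over a baseline, as a fraction."""
    return (variant_rate - baseline_rate) / baseline_rate


baseline_ctr = 0.03   # original content
human_ctr = 0.045     # smart human's version
ai_ctr = 0.0525       # A.I. version

human_lift = lift(baseline_ctr, human_ctr)
ai_lift = lift(baseline_ctr, ai_ctr)
ai_vs_human = lift(human_ctr, ai_ctr)

print(f"Human vs. baseline: {human_lift:.1%}")   # 50.0%
print(f"A.I. vs. baseline:  {ai_lift:.1%}")      # 75.0%
print(f"A.I. vs. human:     {ai_vs_human:.1%}")  # 16.7%
```

Which baseline goes in the denominator is the whole story: the same A.I. result reads as 75% or as roughly 17% depending on what it is compared against.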

CAN A.I. REALLY CREATE BETTER CONTENT THAN A SMART HUMAN?

But let's look more closely. To feel confident that the difference between the A.I.'s CTR and the smart human's CTR is statistically significant rather than random noise, an A/B test must be done. Each version must be viewed by about 13,000 people.

Altogether, about 26,000 samples are needed to make a confident comparison, a number beyond the reach of many B2B media channels.
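The article does not say how the 13,000 figure was derived. One plausible reconstruction, offered here as an assumption, is the standard sample-size formula for comparing two proportions with a two-sided 5% significance level and 80% power. The function name and its defaults are illustrative.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(p1: float, p2: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate viewers needed per variant to detect p1 vs. p2.

    Assumes a two-sided two-proportion z-test; alpha and power are
    conventional defaults, not values stated in the article.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)


# Human version (4.5% CTR) vs. A.I. version (5.25% CTR)
n_per_variant = sample_size_per_variant(0.045, 0.0525)
print(n_per_variant, 2 * n_per_variant)  # roughly 13,000 per variant, 26,000 in total
```

Under these assumptions the result lands close to the figures quoted above. A smaller expected difference between the two versions would push the required audience up sharply, because the difference is squared in the denominator.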

However, those numbers are a drop in the bucket for business-to-consumer (B2C) campaigns, which can reach millions.

Today's NLG programs write B2C content, not B2B. Here's one way A.I. programmers teach NLG to write effective email subject lines:

1. Hundreds of thousands of emails are uploaded into a machine-learning (ML) program that performs a process known as "unsupervised learning." The ML program sifts through the emails, correlating subject line words with email open rates, clicks and conversions.

2. This list of "power words" is saved in a database. Then, an NLG engine uses that power-word vocabulary to engineer human-readable content. No focus groups, consumer behavior or neuromarketing studies are needed; NLG automatically selects the words and grammar to create thousands of message permutations.

3. An ML classifier ranks those permutations by meaningful categories, such as customer persona types or positive/negative emotion levels. Finally, each subject line is given a score for predicted effectiveness. Actual results are fed back into the ML program to continuously improve the prediction model.
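The three steps above can be sketched in miniature. This is a toy illustration, not how any commercial NLG engine works: the emails, open rates, templates and function names are all hypothetical, and simple averaging stands in for the machine-learning models.

```python
from collections import defaultdict
from itertools import product

# Hypothetical training data: (subject line, observed open rate).
emails = [
    ("Free shipping on your first order", 0.24),
    ("Last chance for free returns", 0.30),
    ("Your monthly newsletter", 0.10),
    ("Last chance to save", 0.26),
    ("New arrivals this week", 0.12),
]


def score_words(emails):
    """Step 1: score each word by how far lines containing it beat the average."""
    overall = sum(rate for _, rate in emails) / len(emails)
    rates = defaultdict(list)
    for subject, rate in emails:
        for word in set(subject.lower().split()):
            rates[word].append(rate)
    return {word: sum(r) / len(r) - overall for word, r in rates.items()}


def predict(subject, word_scores):
    """Step 3: predicted effectiveness = mean score of the words we know."""
    known = [word_scores[w] for w in subject.lower().split() if w in word_scores]
    return sum(known) / len(known) if known else 0.0


word_scores = score_words(emails)

# Step 2: generate message permutations from (hypothetical) phrase slots.
openers = ["Last chance for", "This week"]
offers = ["free shipping", "your newsletter"]
candidates = [f"{opener} {offer}" for opener, offer in product(openers, offers)]

ranked = sorted(candidates, key=lambda s: predict(s, word_scores), reverse=True)
for subject in ranked:
    print(f"{predict(subject, word_scores):+.3f}  {subject}")
```

To close the feedback loop described in step 3, the actual open rates of the sent subject lines would be appended to `emails` and `score_words` run again.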

TAKE THE GUESSWORK OUT OF CREATING EFFECTIVE CONTENT

By comparison, human beings can only make an "educated guess" about what makes content more effective. For example, how do you know which of these subject lines will work best?

- Don't miss out! Free Shipping on your first order!

- Beat the Summer heat with Free Shipping!

- LAST CHANCE! FREE SHIPPING

Of course, it's impossible to tell. Why? Because people instinctively know that context is critical for meaningful communications. To answer the big effectiveness question, a lot of smaller questions must be answered, such as:

1. Is "Shipping" the most effective matter of concern?

2. Are "!" and CAPS the most effective formatting?

3. Are "last chance" and "don't miss out" triggering the most effective emotions?

Some of these questions can be answered by A/B testing.

Others need to be answered by account planners and content media strategists who understand that various members of a B2B group decision-making team have different values.
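For the questions that A/B testing can answer, the comparison usually comes down to a significance test on two click-through rates. Below is a minimal sketch using a two-proportion z-test; the click and view counts are hypothetical, chosen to match the 4.5% and 5.25% CTRs discussed earlier.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Return (z, two-sided p-value) for the difference between two CTRs."""
    p_a = clicks_a / views_a
    p_b = clicks_b / views_b
    # Pooled rate under the null hypothesis that both versions perform the same.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical large campaign: 13,000 views per version.
z, p = two_proportion_z_test(585, 13_000, 683, 13_000)
print(f"large audience: z={z:.2f}, p={p:.4f}")

# Hypothetical small B2B channel: 1,000 views per version, similar CTRs.
z_small, p_small = two_proportion_z_test(45, 1_000, 53, 1_000)
print(f"small audience: z={z_small:.2f}, p={p_small:.4f}")
```

With the large audience the difference clears the conventional 5% significance threshold; with the small audience the same CTR gap cannot be distinguished from chance.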

HOW HUMANS CAN USE A.I. AND MACHINE LEARNING TO CREATE CONTENT

Discerning context is very, very difficult for A.I. For example, in spite of 130 million miles of testing, a Tesla's Autopilot system mistook a white tractor trailer for clear sky, causing an accident. Mistaken contextual cues are one reason why Tesla tells drivers to "maintain control and responsibility for your vehicle" and to be prepared to take over at any time.

In training A.I. for use in B2B marketing communications, it helps to narrow the context by focusing on:

- Creating short-form content under 100 words in email, LinkedIn, Twitter, Google AdWords and other succinct formats

- Using concrete feature-benefit-advantage language, not branding metaphors

Because context, language and cognitive impact are so intertwined, Godfrey is exploring simple ways to help human content creators:

- Use communication principles that have been tested for effectiveness rather than go by guesswork

- Employ ML tools to identify cognitive-content factors that boost lift, signal intent and motivate B2B buying decisions

- Combine human creativity and ML objectivity to navigate the complex B2B customer journey—and avoid A.I. accidents

See our roadmap for smarter B2B: "The Future of B2B Marketing is Fueled by Smarter Data."

Godfrey Team

Godfrey helps complex B2B industries tell their stories in ways that delight their customers.